In 2016, the world stepped outside.

Phones in hand, millions moved through parks, sidewalks, waterfronts, and city grids chasing something intangible yet deeply compelling—digital creatures layered over physical space. What seemed like a fleeting cultural phenomenon, a gamified exercise in nostalgia and mobility, has quietly become one of the most ambitious data-gathering projects ever realized.

Nearly a decade later, the scale of that invisible infrastructure is staggering. Over 30 billion images, captured by players of Pokémon GO, have been assembled into a vast, high-fidelity, street-level model of the physical world.

This was not a coordinated mapping initiative. There was no explicit global mission, no collective awareness. Instead, it was play—distributed, repetitive, and incentivized—transforming everyday environments into data.

Now, that dataset is being deployed far beyond gaming. It is training robots.

stir

The original promise of Pokémon GO was simple: bring virtual creatures into the real world. But achieving that illusion required more than GPS. It required understanding space—how objects exist, relate, and persist in three dimensions.

Niantic, the company behind the game, began quietly building that understanding through what it called AR mapping tasks. Players were encouraged to scan landmarks, statues, storefronts, and public spaces—often rewarded with in-game bonuses. Over time, these scans accumulated into a dense archive of visual data, captured from countless angles, lighting conditions, and human perspectives.

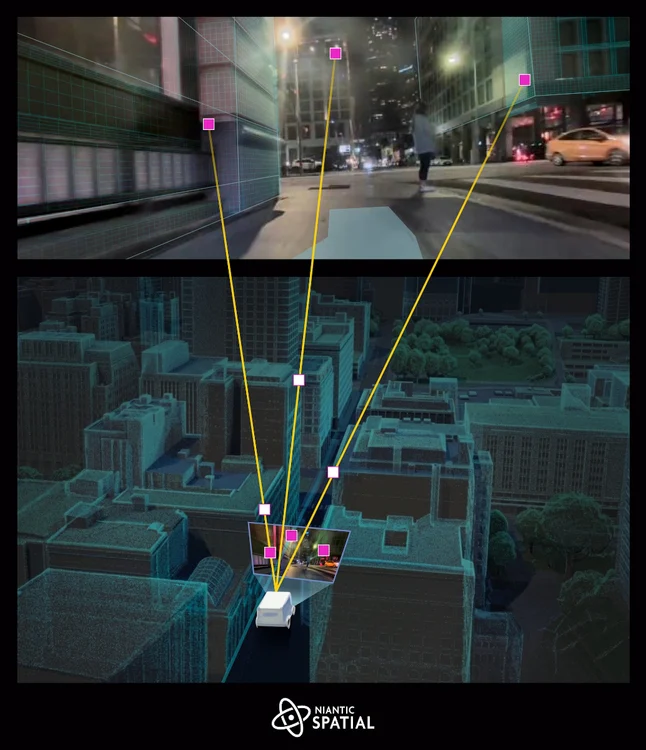

The result is what Niantic Spatial now calls a Large Geospatial Model (LGM)—a system capable of reconstructing the world not just as coordinates, but as a visual, semantic, and spatial reality.

This is a fundamental shift. Traditional maps tell you where things are.

This map understands what things look like.

issue

For decades, GPS has been the backbone of navigation. It works well enough for cars, airplanes, and smartphones—until it doesn’t.

Dense urban environments expose its limitations. Signals bounce off buildings. Accuracy degrades. A location might be off by several meters—insignificant for a pedestrian, but catastrophic for a machine attempting precise movement.

For robots, especially those operating in “last-mile” delivery, precision is everything. Being even a few feet off can mean missing a doorstep, colliding with obstacles, or failing entirely.

This is where Niantic’s dataset becomes transformative.

Instead of relying on satellites, the system uses visual positioning—identifying a location based on what the camera sees. Buildings, street signs, textures, and spatial relationships become anchors. The system can reportedly determine position within centimeters, far surpassing traditional GPS accuracy.
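To make the idea of landmark-based positioning concrete, here is a minimal sketch in Python. It is a simplified 2D analogue, not Niantic's method: it assumes the device already knows the map coordinates of a few landmarks and its distance to each, and it solves for its own position by least squares. Real visual positioning systems typically match image features against a 3D map and recover a full camera pose. The function name `locate` and all coordinates are hypothetical, chosen only for illustration.

```python
import math


def locate(landmarks, distances):
    """Estimate (x, y) from distances to known 2D landmarks.

    Subtracting the first circle equation from the others turns the
    problem into linear equations a*x + b*y = c, solved by least squares.
    """
    if len(landmarks) < 3 or len(landmarks) != len(distances):
        raise ValueError("need at least three landmarks with one distance each")

    (x0, y0), d0 = landmarks[0], distances[0]
    rows = []
    for (xi, yi), di in zip(landmarks[1:], distances[1:]):
        a = 2 * (xi - x0)
        b = 2 * (yi - y0)
        c = d0**2 - di**2 + xi**2 - x0**2 + yi**2 - y0**2
        rows.append((a, b, c))

    # Normal equations (A^T A) p = A^T c for the 2x2 least-squares system.
    saa = sum(a * a for a, _, _ in rows)
    sab = sum(a * b for a, b, _ in rows)
    sbb = sum(b * b for _, b, _ in rows)
    sac = sum(a * c for a, _, c in rows)
    sbc = sum(b * c for _, b, c in rows)

    det = saa * sbb - sab * sab
    if abs(det) < 1e-12:
        raise ValueError("landmarks are collinear; position is ambiguous")

    x = (sac * sbb - sab * sbc) / det
    y = (saa * sbc - sab * sac) / det
    return x, y


# Hypothetical landmark positions in meters (e.g. a sign, a statue, two corners).
landmarks = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
true_position = (3.0, 4.0)
distances = [math.dist(true_position, lm) for lm in landmarks]

estimate = locate(landmarks, distances)
print(estimate)
```

With exact distances the estimate recovers the true position up to floating-point error. With noisy measurements, additional landmarks pull the least-squares answer closer to the truth, which is one reason a dense set of visual anchors matters.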

In essence, the world itself becomes the map.

show

The leap from augmented reality gaming to robotics may seem unexpected, but the underlying problem is identical.

As Niantic Spatial’s leadership has noted, placing a Pokémon convincingly in the world and guiding a robot safely through it require the same foundational capability: understanding real-world space.

That shared challenge has led to a new application. Niantic Spatial is now partnering with robotics companies like Coco Robotics, using this massive image dataset to train delivery bots navigating city streets.

These robots—already operating in cities like Los Angeles, Chicago, Miami, and Jersey City—rely on the system to localize themselves with extreme precision.

The implication is striking:

Every time a player scanned a PokéStop or captured a landmark, they were contributing to the training data of autonomous machines.

We built maps for people. Now, we’re building a new kind of map for robotics and AI. 🤖📍

Niantic Spatial CEO @JohnHanke shares why this foundation will be as essential to the future of AI as digital maps were for the web.

80% of the global economy operates in the physical… pic.twitter.com/eaQeOduOct

— Niantic Spatial 🌎 (@NianticSpatial) February 23, 2026

method

What makes this dataset uniquely powerful is not just its size—it is its perspective.

Most mapping systems rely on aerial imagery or vehicle-mounted sensors. These approaches miss something critical: the human viewpoint. Sidewalk-level detail, occluded spaces, and dynamic urban textures are often invisible to traditional mapping methods.

Pokémon GO solved this unintentionally. Players moved through the world as pedestrians, capturing images from exactly the vantage points that matter most for both humans and robots.

This has produced a map that is:

- Ground-level rather than top-down
- Multi-angle rather than singular
- Continuously updated rather than static
- Context-rich rather than purely geometric

It is, in many ways, the first map to capture the world at human scale with global coverage.

eco

There is an undeniable tension at the core of this story.

The dataset—arguably one of the most valuable geospatial resources ever created—was built not through paid labor or institutional funding, but through voluntary participation in a game.

Players were not explicitly mapping the world. They were playing.

Yet their actions generated data of immense commercial and technological value. Niantic Spatial is now leveraging that data in enterprise applications, from robotics to AI infrastructure.

This raises a broader question:

Who owns the outputs of collective digital behavior?

The players contributed time, movement, and visual data. The company owns the platform, the aggregation, and the model. The resulting system becomes a product—one that can be licensed, deployed, and monetized.

It is a familiar pattern in the digital age, but rarely has it been so physically grounded.

grad

The 30-billion-image dataset is not just a map; it is the foundation for a new category of artificial intelligence.

Large language models understand text.

Image models understand pictures.

Geospatial models like Niantic’s aim to understand the world itself.

This includes:

- Geometry: the shape and structure of environments
- Semantics: identifying objects like buildings, roads, and trees
- Context: understanding how spaces are used and navigated

The goal is what some describe as spatial intelligence—AI that can interpret and interact with physical environments as naturally as humans do.

This has implications far beyond delivery robots.

Autonomous vehicles, augmented reality glasses, urban planning systems, and even defense technologies could all benefit from such models. The same dataset that helps a robot deliver food could one day guide machines in entirely different domains.

safeguard

Niantic has maintained that players were informed when contributing AR data, typically through in-app prompts and opt-in features.

But the scale and downstream use of that data introduce more complex questions.

Many players likely did not anticipate that their gameplay would contribute to a global mapping infrastructure used in robotics and AI. The transformation of entertainment data into industrial technology blurs the boundary between participation and production.

It also highlights a shift in how data is generated:

- Not through deliberate capture
- But through behavioral byproducts

Walking, scanning, exploring—these actions become inputs into systems far removed from their original context.

move

What makes this story particularly compelling is its timeline.

Unlike traditional data projects, which are often structured and time-bound, this dataset emerged organically over nearly ten years. It evolved alongside the game itself, expanding as new features encouraged more scanning and interaction.

At its peak, Pokémon GO had hundreds of millions of players, with tens of millions still active today.

This sustained engagement allowed for:

- Repeated scans of the same locations
- Coverage across diverse geographies
- Data captured under varying conditions

The result is not just scale, but depth—a layered understanding of the world that improves with redundancy and variation.
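One way to see why redundancy helps is the textbook rule that averaging independent measurements shrinks the expected error by the square root of their count. The sketch below assumes each scan's position error is independent and unbiased with the same spread, which real scans will not fully satisfy. The 2.0 m starting error and the function name `standard_error` are illustrative assumptions, not figures or names from Niantic.

```python
import math


def standard_error(sigma_m: float, n_scans: int) -> float:
    """Expected error of the mean of n independent, unbiased measurements."""
    if n_scans < 1:
        raise ValueError("need at least one scan")
    return sigma_m / math.sqrt(n_scans)


# Illustrative only: a hypothetical 2.0 m single-scan error.
for n in (1, 4, 25, 100):
    print(n, standard_error(2.0, n))
```

Under these assumptions, quadrupling the number of scans of a location halves the expected error, so gains are steep at first and flatten as scans pile up.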

culture

Perhaps the most unexpected aspect of this story is its cultural resonance.

Pokémon GO was often framed as a fleeting trend—a moment of collective enthusiasm that briefly reshaped public space. But its legacy is proving far more enduring.

What began as a game has become:

- A global mapping system
- A training dataset for AI
- A foundation for robotics infrastructure

It is a reminder that digital culture does not simply disappear. It accumulates, transforms, and reemerges in new forms.

The act of play—so often dismissed as trivial—can produce systems of profound consequence.

particip

In retrospect, the players of Pokémon GO were not just participants in a game. They were contributors to a distributed sensing network, capturing the world in unprecedented detail.

They mapped streets without calling it mapping.

They generated data without intending to.

They built infrastructure without realizing it.

This reframing challenges traditional notions of authorship and labor in the digital age. It suggests that participation itself—movement, interaction, engagement—can become a form of production.

And as technology continues to evolve, this pattern is likely to repeat.

fwd

Today, a delivery robot navigating a city sidewalk may rely on data captured years earlier by someone chasing a virtual creature.

The connection is invisible, but the system is real.

It reflects a broader shift toward ambient intelligence—systems that operate quietly in the background, built from layers of accumulated data and interaction.

These systems are not always designed explicitly. Sometimes, they emerge.

clue

The story of Pokémon GO’s 30-billion-image dataset is not just about technology. It is about how systems are built, often unintentionally, through collective behavior.

It is about the transformation of play into infrastructure.

Of images into intelligence.

Of movement into maps.

Most of all, it is about a subtle but profound realization:

The digital world is no longer separate from the physical one.

It is constructed from it.

And sometimes, without knowing it, we are the ones building it.